- Stanford 2025 AI Index highlights rising inference expenses.

- Inference costs exceed one-time model training for enterprises.

- Training runs projected to surpass $1 billion by 2027.

- Private AI investment reached $150 billion in 2024.

Businesses deploying artificial intelligence (AI) systems are seeing their cost structure invert: in many applications, the recurring cost of inference now exceeds the fixed cost of model training, according to recent data from Stanford University's 2025 AI Index.

Attention has stayed on eye-catching training runs such as Google's Gemini 1.0 Ultra, estimated at around $192 million, but the real financial strain for most companies lies elsewhere. It lies in inference, the continuous, usage-based computation required every time a model produces a response, processes a transaction or reviews a medical report.

Training is capital-intensive but episodic. Inference is variable and never-ending. Each user prompt triggers new computation, consuming GPU time along with electricity and cooling. When models are accessed through cloud APIs, hyperscalers pass those costs on through per-token pricing, so bills rise with usage.

Escalating AI Training Costs Concentrate Market Power

Spending on training keeps accelerating. According to Stanford researchers, the amortized hardware and energy costs of frontier AI models have grown by an average of 2.4 times per year since 2016, and projections indicate that the largest training runs will surpass $1 billion by 2027.
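To see what a 2.4x annual growth rate implies, the compounding can be sketched in a few lines of Python. The baseline reuses the roughly $192 million estimate for Gemini 1.0 Ultra cited earlier; treating that figure as a starting point and assuming the growth rate holds steady are simplifications made here for illustration, not part of the Stanford analysis.

```python
import math

GROWTH_PER_YEAR = 2.4        # average annual cost growth cited in the article
BASELINE_COST_USD = 192e6    # assumed baseline: Gemini 1.0 Ultra estimate
TARGET_COST_USD = 1e9        # the $1 billion threshold


def years_to_reach(baseline, target, growth):
    """Years of compounding growth needed for baseline to reach target."""
    return math.log(target / baseline) / math.log(growth)


years = years_to_reach(BASELINE_COST_USD, TARGET_COST_USD, GROWTH_PER_YEAR)
print(f"Years to reach $1 billion: {years:.1f}")
```

Under these assumptions, the threshold is reached in a little under two years of compounding.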

X user Aakash Gupta (@aakashgupta) outlined how the AI industry spent three years obsessing over training costs. "The real money was always in inference. Inference went from one-third of AI compute in 2023 to over half in 2025. Deloitte projects two-thirds by end of 2026. The inference-optimized chip market alone will exceed $50B this year. Inference spending jumped from $9.2B to $20.6B in a single year, and GPT-4's inference bill runs at roughly 15x its training cost."

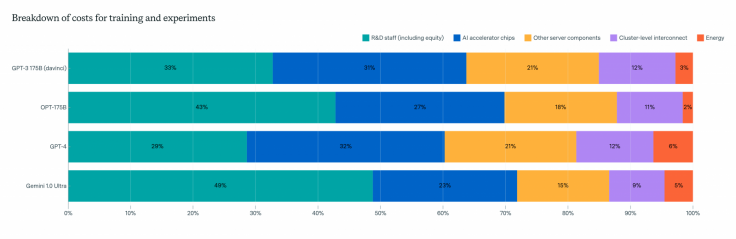

Hardware remains the largest cost component, accounting for between 47 percent and 67 percent of total development costs for models such as GPT-4 and Gemini Ultra, while research and development staff costs account for between 29 percent and 49 percent. Such economics raise barriers to entry, restricting frontier development to well-resourced technology groups and state-backed actors.

Enterprise Usage Triggers Recurring Budget Pressure

For most enterprises, AI cost inflation becomes real once deployment scales. Few organizations develop foundation models; most license access from cloud providers. The economic burden emerges when internal consumption is added up.

One example cited in industry reporting involved a construction firm whose AI predictive analytics tool initially cost under $200 per month. After broader adoption, monthly expenses rose to $10,000. A subsequent shift to self-hosting reduced the figure to roughly $7,000, but the recurring burden remained significant.

Customer service operations illustrate the same trend. Thousands of chatbot queries per hour translate into millions of processed tokens, with billing directly linked to volume. Unlike capital expenditure, these charges persist as long as the system remains active.
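The link between query volume and the monthly bill can be sketched as a short calculation. Every input below (query rate, tokens per query, price per million tokens, hours per month) is a hypothetical figure chosen for illustration and does not come from any provider's price list.

```python
def monthly_inference_cost(queries_per_hour, tokens_per_query,
                           usd_per_million_tokens, hours_per_month=730):
    """Estimate a monthly bill under simple per-token pricing."""
    tokens_per_month = queries_per_hour * tokens_per_query * hours_per_month
    return tokens_per_month / 1_000_000 * usd_per_million_tokens


# Hypothetical chatbot: 5,000 queries per hour, 800 tokens per query,
# billed at $2.00 per million tokens.
cost = monthly_inference_cost(5_000, 800, 2.00)
print(f"Estimated monthly cost: ${cost:,.0f}")
```

Because the cost is a straight product of volume and price, doubling the query rate doubles the bill, which is why usage growth shows up so directly in operating budgets.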

Falling Inference Prices Offset but Do Not Eliminate Risk

There has been measurable relief on pricing. The 2025 AI Index found that inference costs for systems performing at GPT-3.5 levels fell more than 280-fold between November 2022 and October 2024. Hardware efficiency gains and falling per-chip costs have contributed to that decline.

Yet aggregate spending continues to expand. Private AI investment reached $150 billion in 2024, with $33 billion directed toward generative AI. Corporate returns remain measured. Survey data indicate that most companies reporting cost reductions achieved savings of less than 10 percent, while revenue gains typically remained under 5 percent.

Policy frameworks are evolving alongside these economic pressures. Federal legislative activity in the United States has remained limited, even as state-level regulation accelerated in 2024. Europe's AI Act has imposed additional compliance obligations on high-risk systems, adding another layer of cost consideration for multinational operators.

For corporate finance teams, the operational question is no longer about model size but about workload management, query optimization, deployment architecture and cost governance. If inference prices continue to decline while usage scales responsibly, enterprises may bend the cost curve. If demand outpaces efficiency gains, AI spending will increasingly hinge on strict consumption discipline.
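The two scenarios can be compared with a rough projection in which spending equals usage multiplied by unit price. The growth and decline rates and the starting budget below are hypothetical values picked to show each outcome, not forecasts.

```python
def project_spend(initial_spend, usage_growth, price_decline, years):
    """Yearly spend when usage multiplies by usage_growth and
    unit price divides by price_decline each year."""
    factor = usage_growth / price_decline
    return [initial_spend * factor ** year for year in range(years + 1)]


# Hypothetical: prices fall 4x per year in both scenarios.
bending = project_spend(100_000, usage_growth=3.0, price_decline=4.0, years=3)
outpaced = project_spend(100_000, usage_growth=6.0, price_decline=4.0, years=3)

print("Usage grows 3x per year:", [round(s) for s in bending])
print("Usage grows 6x per year:", [round(s) for s in outpaced])
```

Spending falls only when the price decline factor exceeds the usage growth factor; once usage grows faster than prices fall, the total climbs even though each query is cheaper.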