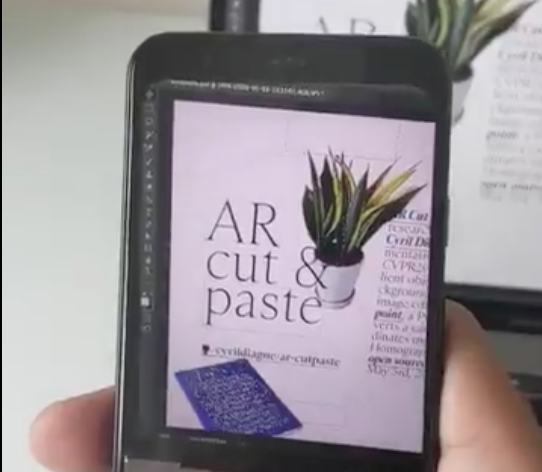

Photographing a real-life object with your smartphone and dropping it straight into a Photoshop window on your computer might have sounded like an insane idea until now. But a new app shows it can be done. The augmented reality-based tool, called AR Cut & Paste, lets you do so by pointing your smartphone camera at the desired object.

Once it captures the subject and strips away the background, all you need to do is point the camera at your computer screen to paste the object in the desired place. Cyril Diagne, the inventor of the service, has posted a video on Reddit to demonstrate it. He has also published the source code on GitHub to back his claim.

Diagne, an artist, designer and programmer, is affiliated with the Google Arts & Culture Lab and also serves as the head of media and interaction design at the Lausanne University of Art and Design.

How to use AR Cut & Paste

To use the service, first install the tool on your smartphone. Then point your device's camera at the desired object and take the picture. When you aim the phone at your computer display, the application transfers the cut-out image to that spot on the screen.

For now, the application takes around two and a half seconds to capture the object on the smartphone and around four more seconds to transfer the image. If that sounds slow, keep in mind that the application is still in its infancy. The developer has announced that another AI + UX prototype will be rolled out sometime next week.

How to install

The application uses both augmented reality and machine learning. The process involves three modules working together: the smartphone app, the local storage server and the target device. Once the smartphone captures the image, the app uploads it to the server. When the user aims the smartphone at the display, the position the phone camera is pointing at on screen is found using a separate package called screenpoint.
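The two-step flow described above (cut on the phone, then paste on the computer) can be pictured with a small Python sketch. This is only an illustration of the idea, not the project's actual code: the class `CutPasteServer` and the callables `remove_background` and `locate_on_screen` are hypothetical names, and the two heavy-lifting steps are replaced with dummy stand-ins.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

Point = Tuple[int, int]


@dataclass
class CutPasteServer:
    """Hypothetical stand-in for the server sitting between phone and computer."""
    remove_background: Callable[[bytes], bytes]  # assumed: call to a background-removal step
    locate_on_screen: Callable[[bytes], Point]   # assumed: finds where the phone is pointing
    _clipboard: Optional[bytes] = None

    def cut(self, photo: bytes) -> None:
        # Step 1: the phone uploads a photo and only the subject is kept.
        self._clipboard = self.remove_background(photo)

    def paste(self, camera_view: bytes) -> Tuple[bytes, Point]:
        # Step 2: the phone is aimed at the display; return the stored
        # cut-out together with the on-screen position it should land on.
        if self._clipboard is None:
            raise RuntimeError("nothing has been cut yet")
        return self._clipboard, self.locate_on_screen(camera_view)


# Dummy stand-ins so the sketch runs without any real image processing.
server = CutPasteServer(
    remove_background=lambda photo: photo.replace(b"background+", b""),
    locate_on_screen=lambda view: (640, 360),
)
server.cut(b"background+plant")
cutout, position = server.paste(b"view of the screen")
```

Keeping the cut-out on the server between the two steps is what lets the phone act as a simple remote: it only has to send pictures, while the computer side does the pasting.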

"For now, the salience detection and background removal are delegated to an external service," the developer said. "It would be a lot simpler to use something like DeepLab directly within the mobile app. But that hasn't been implemented in this repo yet."

To install the service, you need npm on your computer. npm, or Node Package Manager, is the package manager for the Node.js JavaScript platform, used to publish, install, and develop Node programs.

Configure the local server on your computer with the following commands.

virtualenv venv

source venv/bin/activate

pip install -r requirements.txt

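Next, enable the remote connection feature in Photoshop and set a password for it. Then start the local server with the following command, passing the address of the background removal service and replacing YOUR_PASSWORD with the Photoshop password you set.

python src/main.py --basnet_service_ip="http://A.A.A.A" --basnet_service_host="basnet-http.example.com" --photoshop_password YOUR_PASSWORD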
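For readers curious how a script might pick up these three options, here is a minimal sketch using Python's standard argparse module. The option names are taken from the command above; the help texts, the `required=True` settings and the example password are assumptions made for illustration, and the project's real `main.py` may handle them differently.

```python
import argparse


def parse_args(argv=None):
    # Option names mirror the command above; everything else is an assumption.
    parser = argparse.ArgumentParser(description="Local cut-and-paste server (sketch)")
    parser.add_argument("--basnet_service_ip", required=True,
                        help="URL of the background-removal service")
    parser.add_argument("--basnet_service_host", required=True,
                        help="Host name used for the background-removal service")
    parser.add_argument("--photoshop_password", required=True,
                        help="Password set for Photoshop's remote connection")
    return parser.parse_args(argv)


# Example invocation with placeholder values.
args = parse_args([
    "--basnet_service_ip=http://A.A.A.A",
    "--basnet_service_host=basnet-http.example.com",
    "--photoshop_password", "example-password",
])
```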

The application uses BASNet (Boundary-Aware Salient Object Detection) to remove the background from the captured image.

In addition, the application uses OpenCV SIFT to work out which point on the computer display the phone is aimed at. This tracking logic is available as a small standalone Python package, screenpoint.
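A salient object detector such as BASNet produces a mask that marks which pixels belong to the subject. How that mask becomes a cut-out can be sketched in a few lines of plain Python. This is an illustrative assumption about the final step, not the project's code: the function `apply_saliency_mask` is a hypothetical name, and the mask values here are made up rather than produced by a real model.

```python
from typing import List, Tuple

RGB = Tuple[int, int, int]
RGBA = Tuple[int, int, int, int]


def apply_saliency_mask(pixels: List[List[RGB]],
                        mask: List[List[float]]) -> List[List[RGBA]]:
    """Use a saliency mask (0.0 = background, 1.0 = subject) as an alpha channel."""
    if len(pixels) != len(mask) or any(len(p) != len(m) for p, m in zip(pixels, mask)):
        raise ValueError("image and mask must have the same dimensions")
    return [
        # Clamp each mask value to [0, 1] and scale it to an 8-bit alpha value.
        [(r, g, b, round(255 * min(max(m, 0.0), 1.0)))
         for (r, g, b), m in zip(row, mask_row)]
        for row, mask_row in zip(pixels, mask)
    ]


# A 1x2 "image": the first pixel is the subject, the second is background.
image = [[(200, 30, 30), (10, 10, 10)]]
mask = [[1.0, 0.0]]
cutout = apply_saliency_mask(image, mask)
```

Background pixels end up fully transparent, so only the subject is visible once the image is pasted into another document.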
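One common way to turn feature matches into an on-screen position is to estimate a homography between the phone's view and the screen (in OpenCV, for instance, with `cv2.findHomography`) and then project the centre of the phone's view through it. Whether the package does exactly this is an assumption; the sketch below shows only the projection step in plain Python, with a made-up matrix `H` and a hypothetical helper `project_point`.

```python
from typing import Sequence, Tuple

Matrix3x3 = Sequence[Sequence[float]]


def project_point(h: Matrix3x3, x: float, y: float) -> Tuple[float, float]:
    """Map (x, y) in the phone image to screen coordinates through homography h."""
    xs = h[0][0] * x + h[0][1] * y + h[0][2]
    ys = h[1][0] * x + h[1][1] * y + h[1][2]
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    if abs(w) < 1e-12:
        raise ValueError("point maps to infinity under this homography")
    # Divide by w to go from homogeneous back to pixel coordinates.
    return xs / w, ys / w


# Made-up homography: scales phone coordinates by 2 and shifts them by (100, 50).
H = [[2.0, 0.0, 100.0],
     [0.0, 2.0, 50.0],
     [0.0, 0.0, 1.0]]
view_width, view_height = 640, 480
screen_x, screen_y = project_point(H, view_width / 2, view_height / 2)
```

Projecting the centre of the camera view gives the spot the phone is "looking at", which is where the cut-out would be pasted.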