Researchers at the Faculty of Informatics, Mathematics and Computer Science at the Higher School of Economics have developed an automated system that can identify people's emotions from their voices.

The research, unveiled at the major international conference Neuroinformatics-2017, presented a system that outperforms traditional algorithms and could enable better interaction between people and computers.

The system recognizes eight emotions: neutral, calm, happy, sad, angry, scared, disgusted, and surprised. According to the researchers, it identified the emotion correctly in 70% of the cases presented to it.

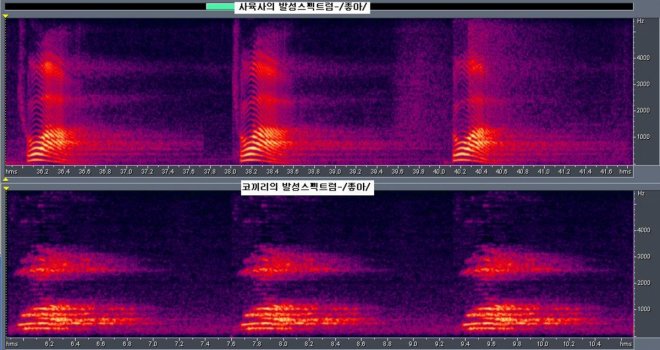

The researchers converted the sounds into spectrogram images and analyzed them with a deep convolutional neural network (CNN), a type of artificial neural network commonly used to analyze visual imagery. This allowed them to apply image recognition techniques to match each image to its corresponding emotion.
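The pipeline described above (audio converted to a spectrogram image, the image classified by a CNN) can be sketched in Python with NumPy. This is a minimal illustration under stated assumptions, not the HSE team's model: the frame length, hop size, single convolutional layer, and random (untrained) weights are hypothetical choices, and a 440 Hz tone stands in for a real speech recording. The sketch computes a log-magnitude short-time Fourier transform (STFT) spectrogram, then runs one convolution, a ReLU, global average pooling, and a softmax over the eight emotion labels.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

# The eight emotion classes named in the article.
EMOTIONS = ["neutral", "calm", "happy", "sad", "angry", "scared", "disgusted", "surprised"]


def spectrogram(signal, frame_len=256, hop=128):
    """Log-magnitude STFT spectrogram: rows are frequency bins, columns are time frames."""
    window = np.hanning(frame_len)
    n_frames = 1 + (len(signal) - frame_len) // hop
    frames = np.stack([signal[i * hop : i * hop + frame_len] * window
                       for i in range(n_frames)])
    magnitude = np.abs(np.fft.rfft(frames, axis=1))  # (n_frames, frame_len // 2 + 1)
    return np.log1p(magnitude).T                      # (freq, time): a 2-D "image"


def toy_cnn_forward(image, kernels, weights, bias):
    """Hypothetical one-layer CNN: conv -> ReLU -> global average pool -> linear -> softmax."""
    windows = sliding_window_view(image, kernels.shape[1:])       # (H', W', kh, kw) patches
    feature_maps = np.einsum("ijab,kab->kij", windows, kernels)   # (K, H', W') valid cross-correlation
    features = np.maximum(feature_maps, 0.0).mean(axis=(1, 2))    # ReLU, then one value per kernel
    logits = weights @ features + bias                            # one score per emotion
    exp = np.exp(logits - logits.max())                           # numerically stable softmax
    return exp / exp.sum()


# Usage: a 1-second 440 Hz tone at 8 kHz stands in for a real voice recording.
sample_rate = 8000
t = np.arange(sample_rate) / sample_rate
audio = np.sin(2 * np.pi * 440 * t)
spec = spectrogram(audio)                                         # shape (129, 61)

rng = np.random.default_rng(0)
kernels = rng.standard_normal((4, 3, 3))                          # 4 untrained 3x3 filters (hypothetical)
weights = rng.standard_normal((len(EMOTIONS), 4))                 # untrained classifier weights (hypothetical)
bias = np.zeros(len(EMOTIONS))
probs = toy_cnn_forward(spec, kernels, weights, bias)
print(EMOTIONS[int(np.argmax(probs))])                            # arbitrary, since nothing is trained
```

Because the weights are random, the predicted label here is meaningless; a working system would learn the filters and classifier weights from labelled emotional speech and would typically stack several convolutional layers.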

However, the system did not always distinguish between similar emotions: it confused happiness with surprise and fear with sadness, and it sometimes interpreted surprise as disgust.
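Errors like these are usually summarized in a confusion matrix, where each off-diagonal cell counts how often one emotion was mistaken for another, and overall accuracy (the 70% figure above) is the share of samples on the diagonal. The plain-Python sketch below is illustrative only; the function names are hypothetical, and the ten labels in the usage example are invented, not the study's data.

```python
# The eight emotion classes named in the article.
EMOTIONS = ["neutral", "calm", "happy", "sad", "angry", "scared", "disgusted", "surprised"]


def confusion_matrix(true_labels, predicted_labels, classes):
    """matrix[i][j] counts samples whose true class is classes[i] and prediction is classes[j]."""
    index = {c: i for i, c in enumerate(classes)}
    matrix = [[0] * len(classes) for _ in classes]
    for true, pred in zip(true_labels, predicted_labels):
        matrix[index[true]][index[pred]] += 1
    return matrix


def accuracy(matrix):
    """Share of samples on the diagonal, i.e. classified correctly."""
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    total = sum(sum(row) for row in matrix)
    return correct / total


def most_confused(matrix, classes, top=3):
    """Off-diagonal (true, predicted) pairs with the highest counts."""
    pairs = [((classes[i], classes[j]), matrix[i][j])
             for i in range(len(classes))
             for j in range(len(classes))
             if i != j and matrix[i][j] > 0]
    return sorted(pairs, key=lambda pair: pair[1], reverse=True)[:top]


# Ten invented predictions for illustration only (not the study's data).
truth = ["happy", "happy", "surprised", "surprised", "scared",
         "sad", "angry", "calm", "neutral", "disgusted"]
preds = ["happy", "surprised", "disgusted", "surprised", "sad",
         "sad", "angry", "calm", "neutral", "disgusted"]
cm = confusion_matrix(truth, preds, EMOTIONS)
print(accuracy(cm))                 # 0.7
print(most_confused(cm, EMOTIONS))  # [(('happy', 'surprised'), 1), (('scared', 'sad'), 1), (('surprised', 'disgusted'), 1)]
```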

Computers have long been used to convert speech to text, but they could not identify the emotional content of speech and could therefore misinterpret sentences or words.

Recognizing emotions and attitudes from speech had been explored before. At the 168th Meeting of the Acoustical Society of America (ASA), held in Indianapolis, Indiana, in 2014, Valerie Freeman, a Ph.D. candidate in the Department of Linguistics at the University of Washington (UW), and her colleagues described the Automatic Tagging and Recognition of Stance (ATAROS) project, which aims to recognize the stances, opinions, and attitudes that human speech can reveal.

Freeman said: "What is it about the way we talk that makes our attitude clear while speaking the words, but not necessarily when we type the same thing? How do people manage to send different messages while using the same words? These are the types of questions that ATAROS project seeks to answer."

Research on the Automatic Tagging and Recognition of Stance (ATAROS) project is still ongoing.